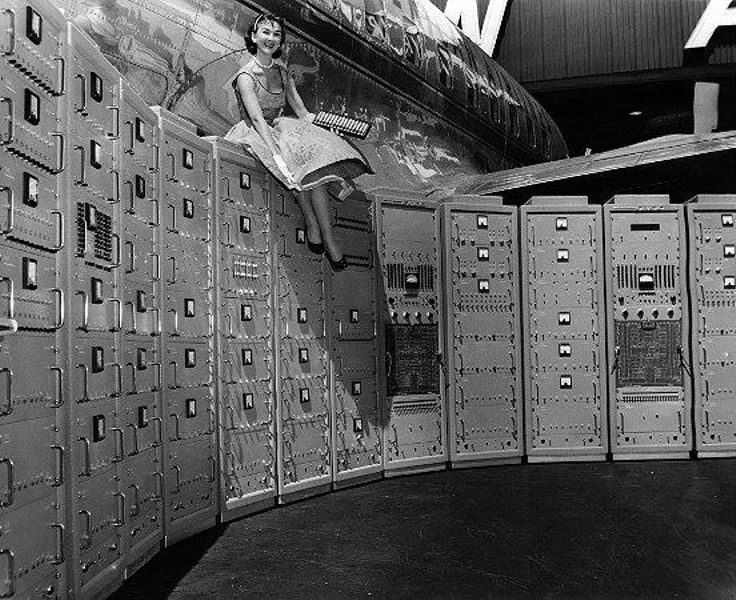

Beckman computer at the San Francisco airport, 1956

Every once in a while, I like to go into the Museum’s permanent exhibition Revolution: The First 2000 Years of Computing. It isn’t unusual for curators at many museums to rarely visit the exhibits they curated – after opening, the public galleries often get turned over to educators, docents, and public programs. But I enjoy the interaction with the visitors and find their questions and interests inspiring.

I particularly look for visitors in one of the galleries I curated, Analog Computers. Oftentimes, I find someone reading a label or looking at the objects with a mix of curiosity and bewilderment. The Analog gallery is an early stop during a visitor’s tour through the exhibition. Many visitors take their time in these early galleries before they realize the vastness of Revolution. The mechanical nature of many of the artifacts in the early galleries also gives visitors a sense that the objects can be comprehended by visual inspection, whereas the operation of artifacts in later galleries is more opaque.

When approaching visitors in the Analog Computers gallery, I usually ask them about their background in computing. I have found that people who are professional software developers have a more difficult time understanding the function, application, and relevance of analog computation. Programmers are taught to think about how to express algorithms in instructions, which is simply not how analog computers work. But when I have talked to physicists or industrial mechanics, I have discovered that they use approaches in their training or daily work that make the operation of analog computers very natural to them.

Martin-Marietta Tactical Avionics System Simulator, ca. 1970

In a recent contribution to the scholarly journal Technology and Culture, historian Nathan Ensmenger proposed in his article The Digital Construction of Technology: Rethinking the History of Computers in Society that computing history is not so much about the history of information, which is too broad, or the history of computerization, which is too narrow, but about the way digitization has altered society. While I applaud his viewpoint, he puts analog computing in the category of precursors to large-scale electronic computing, a view echoed in many standard works on computing history. Works that focus on analog control systems, such as David Mindell’s excellent book Between Human and Machine: Feedback, Control, and Computing before Cybernetics, offer a more balanced view of the importance of analog techniques. Mindell highlights the extraordinary work done during World War II that contributed a great deal to the Allied success. Other monographs by James Small, Charles Care, and Bernd Ulmann (whose book is currently only available in German) provide a great overview of the historical developments up to and including electronic analog computing and its applications.

If analog computing appears to be just a fringe element or an antecedent at best in the main narrative of computing history, why devote precious floor space in a physical exhibition to this topic? To answer this question, it seems worthwhile to explore what is being displayed in Revolution and why.

Early on in the content development for the exhibition, it was clear that analog should receive some mention, but it was less clear what the focus should be. The proposed gallery was to encompass all analog, as opposed to digital, computing techniques and to feature a broad range of objects and stories, from slide rules and planimeters to differential analyzers, gun and bomb sights, and electronic analog computers. In effect, the implementation of the computation was the dividing line. As the content development progressed, it became clear that this dividing line was artificial and would ultimately ghettoize objects that could tell a much richer story were they to be embedded in a larger context.

Moving the slide rules into the calculator section was a logical conclusion. Slide rules were used in concert with adding machines and are seen as part of a larger group of mathematical instruments. The gun and bomb sights fit in nicely with real-time computing, which has deep roots in military applications. As a result, the conscious decision was made to limit the Analog Computers gallery to general-purpose analog computers and to exclude mathematical instruments as well as special-purpose and control systems.

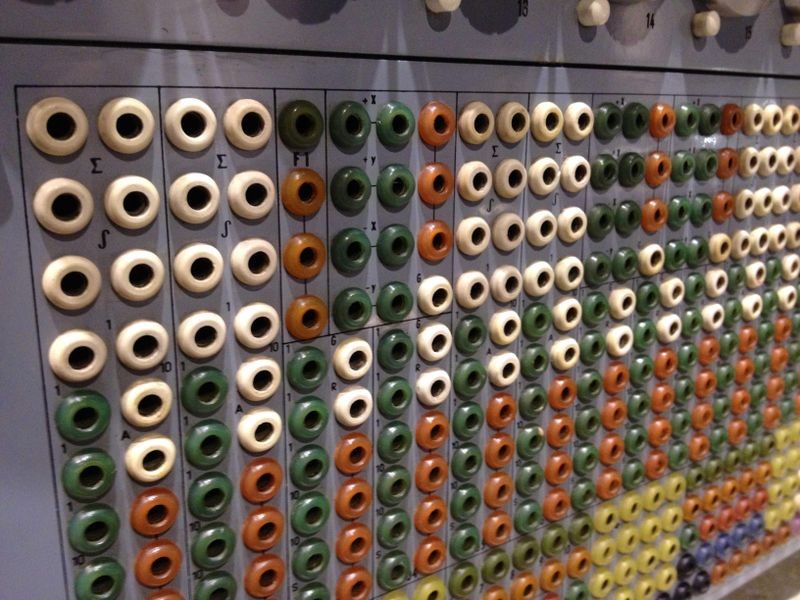

Telefunken RAT 700 patch panel with sum (∑) and integral (∫) signs

What, then, is a general purpose analog computer? Two fundamental aspects of an analog computer help differentiate it from a digital computer. Firstly, it is primarily used to model a problem; secondly, the results are obtained by measuring. Modeling a problem has a rich tradition in many engineering disciplines and can take different shapes. Scale models are quite common during the design phase of an engineering project. It is important to understand, though, that what the engineer models with a general purpose analog computer is not the real-world system itself (a direct analogy), but the mathematical representation of that system. The engineer devises the mathematics of what is to be modeled and then builds a physical analog to those equations. Once computation is initiated, the analog computer essentially simulates the real world by running the formulas, and the results can be measured as mechanical motion or electric voltages.
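For readers who think in code, the idea of building a physical analog to an equation can be imitated, very loosely, on a digital machine. The sketch below is only an illustration under stated assumptions: the damped-spring equation x'' = -(c/m) x' - (k/m) x, the function name `run_patch`, and all parameter values are hypothetical choices of mine, not taken from any machine in the gallery. A real analog computer integrates continuously and in parallel; this loop merely mimics a summer feeding two integrators in small time steps.

```python
def run_patch(k_over_m=4.0, c_over_m=0.5, x0=1.0, v0=0.0, dt=1e-3, t_end=10.0):
    """Step-wise imitation of an analog patch for x'' = -(c/m) x' - (k/m) x.

    All names and default values are illustrative assumptions.
    Returns a list of (time, position) pairs, like a plotter trace.
    """
    x, v = x0, v0                      # initial conditions set on the integrators
    trace = [(0.0, x)]
    steps = int(round(t_end / dt))
    for i in range(1, steps + 1):
        # Summer: combine the two fed-back signals into the acceleration.
        a = -c_over_m * v - k_over_m * x
        # First integrator: acceleration -> velocity.
        v += a * dt
        # Second integrator: velocity -> position.
        x += v * dt
        trace.append((i * dt, x))
    return trace


if __name__ == "__main__":
    # "Measure" the result by sampling the position output once per time unit.
    for t, x in run_patch()[::1000]:
        print(f"t={t:5.2f}  x={x:+.4f}")
```

Changing `c_over_m` or `k_over_m` and rerunning is the rough digital counterpart of turning a coefficient potentiometer and watching the output curve change.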

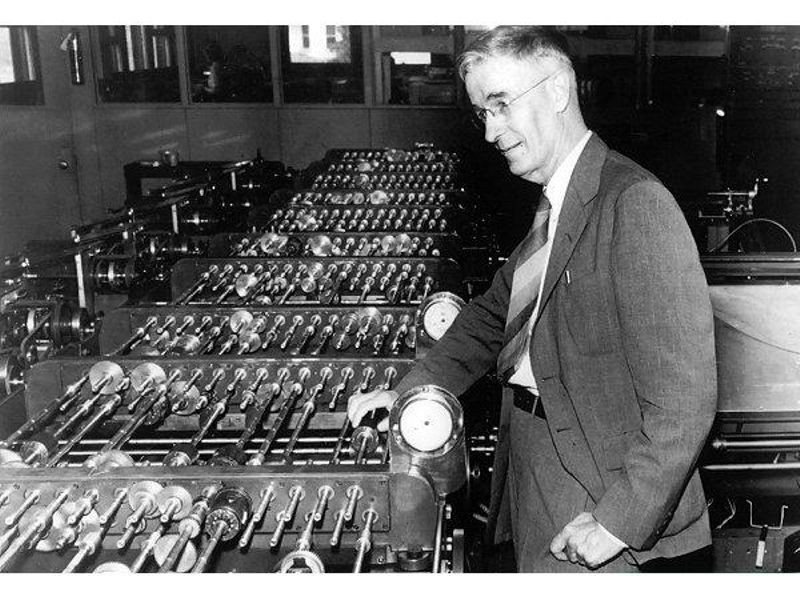

This may seem like an unusual approach today, but it needs to be understood in the context of its time. Mathematics training was not as rigorous for the early 20th century engineer, and solving a problem analytically or numerically (if possible at all) was seen to be outside the skills of an engineer. Even Vannevar Bush saw the great educational benefit of MIT’s mechanical differential analyzer to young engineers: swapping gears made the math literally tangible. But more fundamentally, the engineer, as the user of the analog computer, interacts with the machine constantly. After the equations are devised, the problem needs to be scaled to the range of numbers that can be represented by the computer, the right arrangement of gears or electronic circuits is designed, constant values or special functions are input into the machine, and ultimately the problem is run and the output observed interactively.
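The scaling step mentioned above can be sketched in a few lines. This is a minimal illustration, assuming the machine represents every variable within plus or minus one "machine unit" (the actual reference voltage differed from machine to machine); the function names and the 250 m/s example are hypothetical.

```python
def amplitude_scale(max_abs_value, machine_unit=1.0):
    """Return a scale factor that maps the expected problem range onto
    [-machine_unit, +machine_unit]. Names and defaults are assumptions."""
    if max_abs_value <= 0:
        raise ValueError("expected maximum must be positive")
    return machine_unit / max_abs_value


def to_machine(value, scale):
    """Convert a problem quantity into a machine variable."""
    return value * scale


def to_problem(machine_value, scale):
    """Convert a measured machine variable back into problem units."""
    return machine_value / scale


if __name__ == "__main__":
    # Hypothetical example: a velocity expected to stay within +/-250 m/s.
    scale = amplitude_scale(250.0)
    print(to_machine(100.0, scale))   # machine variable for 100 m/s
    print(to_problem(0.4, scale))     # reading of 0.4 units back in m/s
```

If the engineer underestimates the maximum, the machine variable leaves the representable range, which is one reason the interaction with the machine was so iterative.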

Vannevar Bush (1890–1974) with his differential analyzer, ca. 1930

The computing elements of mechanical analog computers have their roots in the planimeter, a mathematical instrument for measuring the area of irregular shapes. Mechanical or graphical solutions to mathematical problems used to be powerful tools in the engineer’s or mathematician’s toolkit. The electronic analog computers’ main element, the operational amplifier, originated in control applications and is still widely used. The histories of both components are featured in the Analog Computers gallery to show that analog computing can be implemented in different ways, yet the trajectory of the techniques continued.

A common question is why or how analog computers were replaced by digital computers. There are several answers to this question and, as always, the real story is quite complex. Electronic, programmable digital computers became available in the 1950s; at the same time, several companies offered large electronic analog computers as well. The question of which one was superior was hotly contested in those days. Electronic analog computers – originally also referred to as electronic differential analyzers – were quite popular in the burgeoning aerospace industries. Not only did the interaction of the engineers with the machine fit nicely into existing workplace practice, but the machines also simulated the problems inherently in parallel and in real time or faster. Programming an early digital computer required significant and rare skills. Many of the early digital machines were too expensive to let an engineer work on them directly and iteratively: programs were submitted as batch jobs, with the resultant output distributed back to the programmer hours or days later and rarely in a graphical format.

Systron-Donner Analog Computers brochure

Analog computers were not just one type of machine, but a large range of products with different target markets, capabilities, and price points. With digital computers becoming more capable, digital computing techniques crossed over into the analog computing world with a number of hybridized approaches. By the mid-1960s, a wide range of analog-digital hybrid systems was marketed, and large analog computer makers like EAI also offered fully digital systems. The cost of digital computers had significantly decreased by that time and their capabilities continued to improve. Specialized programming languages and simulation tools, alongside hybridization, made the digital computer a compelling alternative for many standalone analog computing installations. However, analog computers were not uniformly replaced, because their users and applications were not a homogeneous group. In certain application areas (and especially in education), general purpose analog and hybrid computers played a role well towards the end of the 20th century. Of course, the components and techniques used continue to be implemented by electronics engineers in many circuit designs.

Electronic Tube Integrator ELI-12-1 at the Polytechnic Museum, Moscow, Russia

It should be obvious, then, that analog computers were not just an antecedent to digital computing. While the general purpose analog computer fell victim first to hybridization and then to more powerful software solutions on digital computers, this did not happen in a pre-determined fashion. The creation and use of these machines, the different approaches employed, and the user communities and their identities in a changing field provide rich stories that parallel the history of digital computing. These stories can be seen repeated in other, quite diverse (digital) computing fields where more flexible and cheaper general purpose computers replaced their special purpose counterparts: LISP machines for artificial intelligence, accounting machines and small business computers, and military computing systems are just some examples.

But the story of analog computers and analog computing shouldn’t be considered as a simple case study of failure either. Many of these machines were critically important in the aerospace industries for decades. Studying them provides access to a different way of thinking and that in turn offers new insight into the digitization of the world.