Scientific

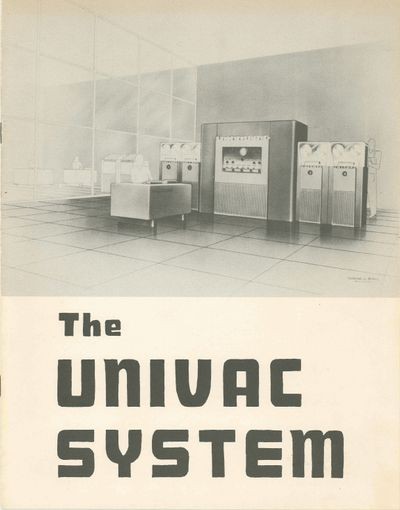

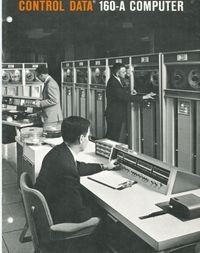

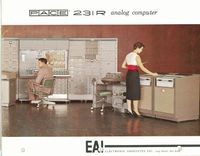

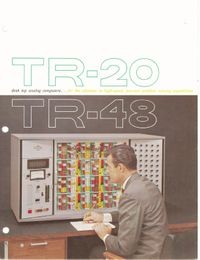

In the earliest days of electronic computing (around the time of World War II), all computing was ‘scientific.’ That is, the only applications capable of justifying the expense of an electronic computer were generally military or scientific in nature.

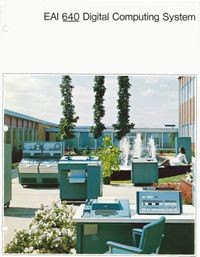

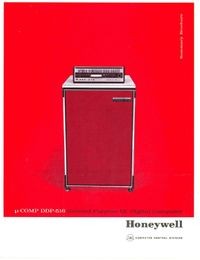

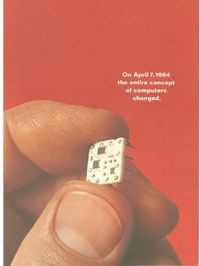

As computers became a commercial product in the mid-1950s and their utility to business could be established (or at least suggested), computing was divided into business and scientific markets. The two markets had very different technical requirements. Business machines worked in decimal arithmetic (basically dollars and cents), and speed was not as critical. Scientific machines did extensive floating-point calculations, so long word lengths, high-speed memory, and large, fast storage were all critical. With IBM’s System/360 product line, introduced in April 1964, the distinction blurred.

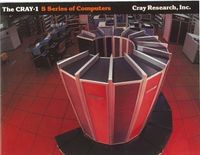

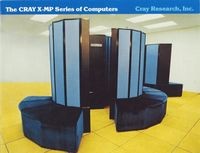

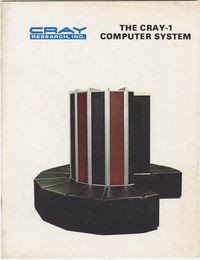

Scientific uses have fostered both the spread of smaller, less powerful workstations and PC-like computers to individual users and the design and use of large, very expensive supercomputers. Scientific applications were in large measure driven by the Cold War’s demands for cryptography, bomb simulation, and weather prediction, and the hardware and software of the supercomputers designed for these tasks reflected those needs. Today, the fastest computers in the world are built of thousands of individual microprocessors in a massively parallel arrangement.