How Do Digital Computers “Think”?

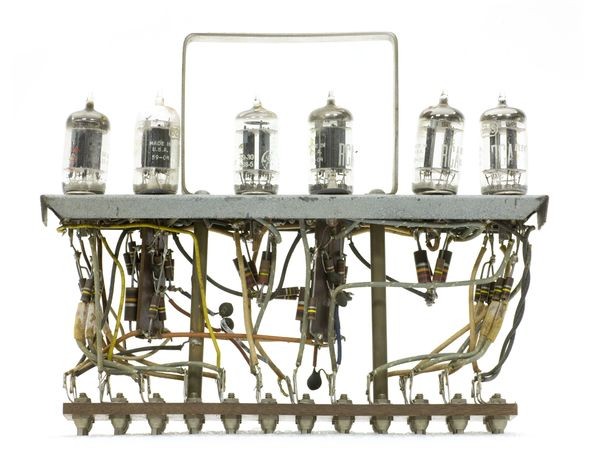

Vacuum tube module, circa 1955

In the 1940s and 1950s, “electronics” meant vacuum tubes: big, hot, and power-hungry. A single “dual-triode” vacuum tube could be configured as one flip-flop, storing one bit of data.

All digital computers rely on a binary system of ones and zeros, and on rules of logic set out in the mid-1800s by English mathematician George Boole.

A computer can represent the binary digits (bits) zero and one mechanically with wheel or lever positions, or electronically with voltage or current. The underlying math remains the same. Bit sequences can represent numbers or letters.
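As a rough illustration of how one bit sequence can stand for either a number or a letter, here is a minimal Python sketch. The helper names `to_bits` and `from_bits` are hypothetical, and the use of the ASCII character code for the letter is an assumption made for the example, not something the text above specifies.

```python
def to_bits(value: int, width: int = 8) -> str:
    """Return the binary digits of a non-negative integer, zero-padded to `width`."""
    return format(value, f"0{width}b")


def from_bits(bits: str) -> int:
    """Read a string of '0' and '1' characters back as an integer."""
    return int(bits, 2)


# The same eight bits can be read as a number or as a letter.
pattern = to_bits(65)                  # '01000001'
as_number = from_bits(pattern)         # 65
as_letter = chr(from_bits(pattern))    # 'A' (assuming the ASCII code)

print(pattern, as_number, as_letter)
```

Whether the pattern means 65 or "A" depends only on how the program chooses to interpret it; the bits themselves are identical.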

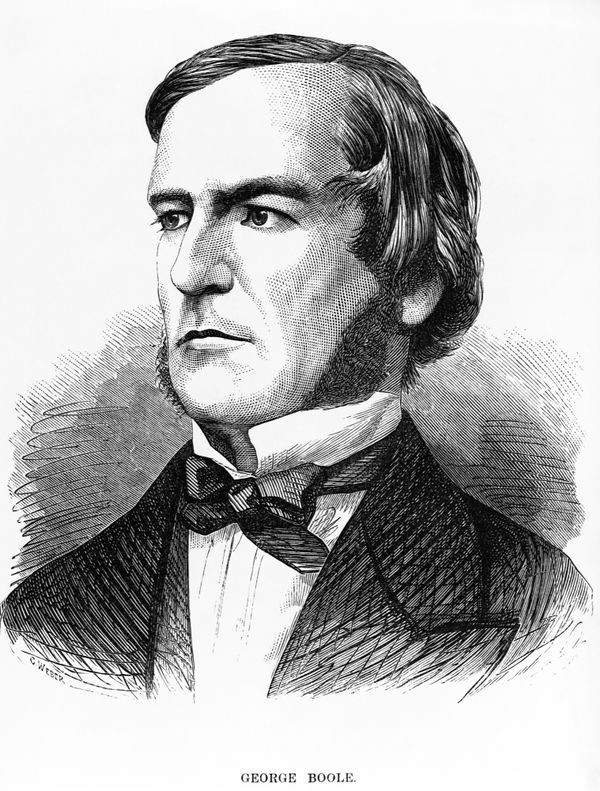

George Boole (1815-1864)

English mathematician George Boole laid the foundations for the logic system that now bears his name: Boolean logic. His system of logical operations based on simple principles is the bedrock of modern computers.

Boolean Logic

Just three operations (AND, OR, and NOT) can perform all logic functions. So argued self-taught mathematician George Boole in his 1847 work, The Mathematical Analysis of Logic. In 1854, as Professor of Mathematics at Queen’s College, Cork, Ireland, Boole expanded his concept in An Investigation of the Laws of Thought.
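The claim that AND, OR, and NOT suffice can be sketched in a few lines of Python. The example below is only an illustration: the function names are hypothetical, bits are modeled as the integers 0 and 1, and "exclusive or" (XOR) is chosen as one sample function to build from the three basic operations.

```python
from itertools import product


def AND(a: int, b: int) -> int:
    return a & b


def OR(a: int, b: int) -> int:
    return a | b


def NOT(a: int) -> int:
    return 1 - a


def XOR(a: int, b: int) -> int:
    # "a or b, but not both", written with only AND, OR, and NOT
    return AND(OR(a, b), NOT(AND(a, b)))


def truth_table(fn):
    """List (a, b, fn(a, b)) for every combination of two input bits."""
    return [(a, b, fn(a, b)) for a, b in product((0, 1), repeat=2)]


for row in truth_table(XOR):
    print(row)
```

Any other truth table can be assembled the same way, by combining the three operations until every row gives the desired output.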

For decades, Boole’s ideas had no apparent practical use. His work was largely ignored until Claude Shannon applied it to telephone switch design in the 1930s. Today it is called Boolean algebra, a foundation of digital logic.

Putting Boolean Logic to Work

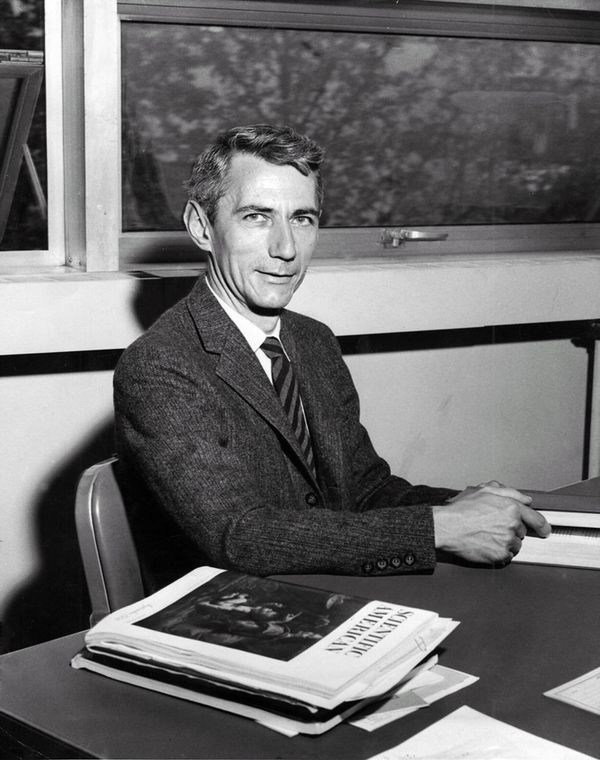

Claude Shannon encountered George Boole’s ideas in a college philosophy class in the 1930s. He recognized their value for real-world problems.

Shannon’s 1937 MIT master’s thesis, A Symbolic Analysis of Relay and Switching Circuits, applied Boolean algebra to the design of logic circuits using electromechanical relays. Shannon is also remembered for a seminal 1948 paper on information theory, A Mathematical Theory of Communication.

Claude Shannon wasn’t the first to apply Boole’s concepts. Victor Shestakov proposed similar ideas in 1935, but didn’t publish until 1941—and then only in Russian.

Claude Shannon (1916-2001)

While an MIT graduate student, Claude Shannon wrote his master’s thesis on Boolean logic in relay-based computer designs, a monumental step toward modern digital computers.

A Mathematical Theory of Communication

Co-written with Warren Weaver, this 1963 book (based on a 1948 paper) extended the basis for modern computing using Boolean logic, and for the transmission of information over communication channels.

What Makes A Computer Circuit?

Computer circuits are built from simple elements called “gates,” made from either mechanical or electronic switches. They operate according to Boolean algebra to determine the value of an output signal (one or zero), or to save a value in a “flip-flop,” a storage unit built from several gates.
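To give a feel for how gates can "save a value," the sketch below simulates one of the simplest storage circuits, a set/reset latch made from two cross-coupled NOR gates. This is a simplified stand-in chosen for illustration (practical flip-flops add clocking and use more gates), and the names `NOR` and `sr_latch` are hypothetical.

```python
def NOR(a: int, b: int) -> int:
    """Output 1 only when both inputs are 0."""
    return 1 - (a | b)


def sr_latch(s: int, r: int, q: int) -> int:
    """Return the settled output of a cross-coupled NOR latch.

    s sets the stored bit to 1, r resets it to 0, and q is the bit
    currently stored. Driving s and r to 1 together is not a valid input.
    """
    q_bar = NOR(s, q)
    # Feed each gate's output back into the other until the loop settles.
    for _ in range(4):
        q = NOR(r, q_bar)
        q_bar = NOR(s, q)
    return q


q = 0
q = sr_latch(1, 0, q)   # set: the latch now stores 1
q = sr_latch(0, 0, q)   # both inputs off: the latch keeps its value
print(q)
q = sr_latch(0, 1, q)   # reset: the latch now stores 0
q = sr_latch(0, 0, q)   # still holding the new value
print(q)
```

The storage comes from the feedback: each gate's output is an input to the other, so the pair keeps reinforcing whichever state it was last pushed into.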

Three basic gate types are AND, OR, and NOT. But others, such as NAND (NOT AND), can by themselves form any computer circuit, including those for arithmetic, memory, and executing instructions. Modern computers have the equivalent of hundreds of millions of NAND gates.
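The idea that NAND alone is enough can be illustrated with a short Python sketch that builds NOT, AND, OR, and XOR from a single `NAND` function, then combines them into a one-bit "half adder" as a small example of arithmetic. The function names and the choice of a half adder are assumptions made for this illustration.

```python
def NAND(a: int, b: int) -> int:
    """Output 0 only when both inputs are 1."""
    return 1 - (a & b)


# Every gate below is wired from NAND gates only.
def NOT(a: int) -> int:
    return NAND(a, a)


def AND(a: int, b: int) -> int:
    return NOT(NAND(a, b))


def OR(a: int, b: int) -> int:
    return NAND(NOT(a), NOT(b))


def XOR(a: int, b: int) -> int:
    n = NAND(a, b)
    return NAND(NAND(a, n), NAND(b, n))


def half_adder(a: int, b: int) -> tuple[int, int]:
    """Add two bits, returning (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)


for a in (0, 1):
    for b in (0, 1):
        print(a, "+", b, "=", half_adder(a, b))
```

Chaining adders like this one, bit by bit, is one way larger arithmetic circuits can be assembled from the same single gate type.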

IBM Wire contact relays, 1944

Many calculators and computers in the 1930s and 1940s used relays, which are relatively slow, as switching elements. These two relays form a flip-flop.

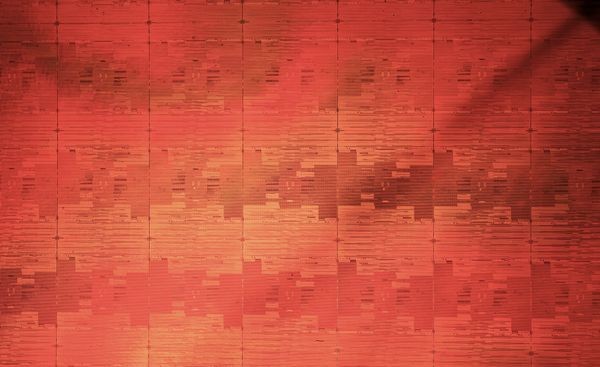

Intel Montecito wafer (close-up), 2009

There are 45 separate microprocessor chips on this silicon wafer, each of which has 1.7 billion transistors. At this size, about 3 million flip-flops could fit on the head of a pin.

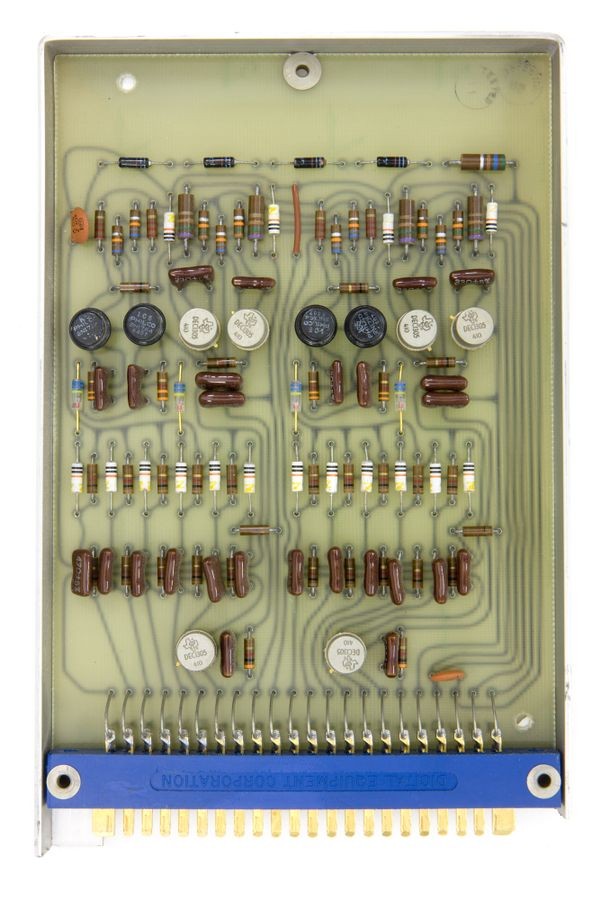

Dual Flip Flop 4209J System Building Block, DEC, 1964

Computers began using transistors in the late 1950s, ending the vacuum tube computer era. Transistors were smaller, cheaper, and more reliable, and used far less power. At least two transistors are needed to make a flip-flop.
